Thinking with AI through art history and social justice

Over the last few years, we’ve seen a familiar story replay itself. From trains to electricity, telephones, radio, the internet, and social media, whenever a new technology arrives there’s excitement, then fear, then calls to ban it. In the arts, this has reached a fever pitch around AI. The outcry is understandable: the speed is dizzying, the extraction opaque, the power asymmetries grotesque. But we live inside a long history of artistic experiments with automation, chance‑based systems, and machine‑driven production, so I worry that rejecting AI outright risks throwing out the ethical questions along with the tools.

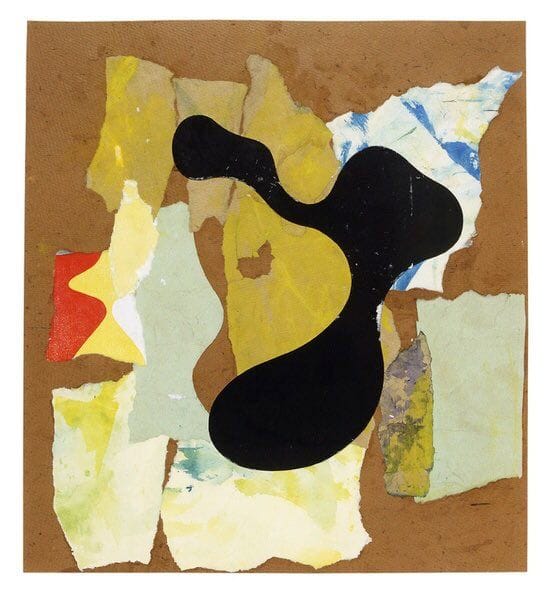

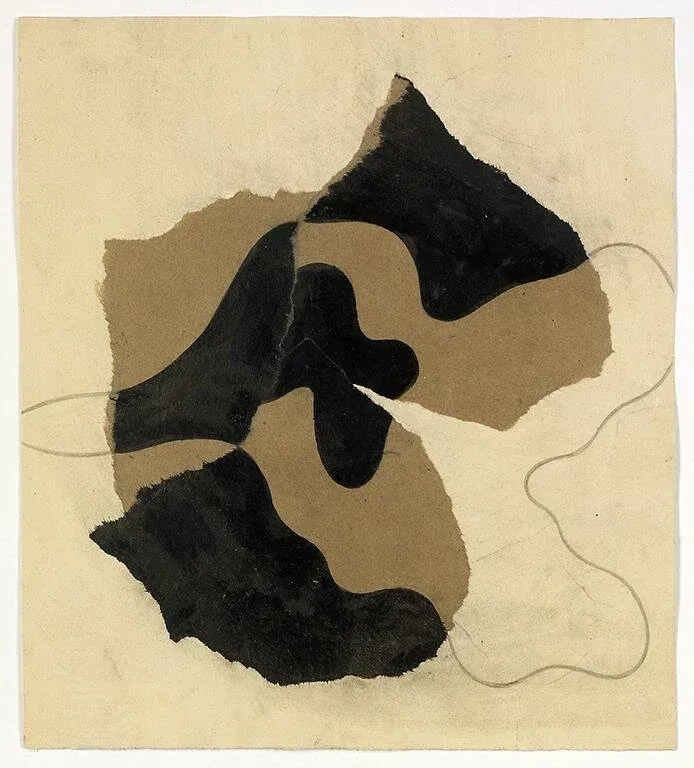

Hans Arp

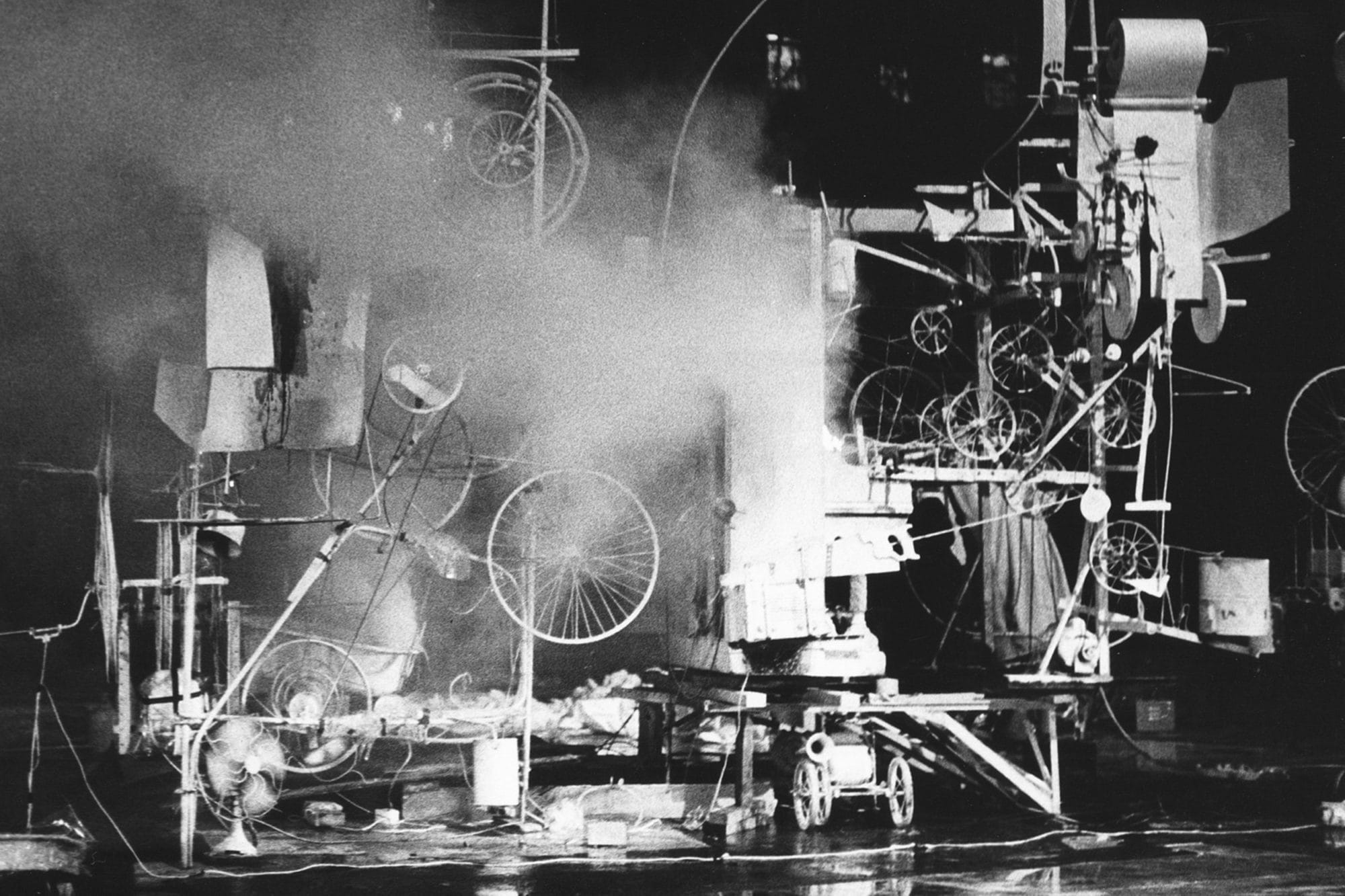

From Dada’s chance operations and Arp’s torn‑paper compositions to Jean Tinguely’s self‑destructing machines, artists have long tested the edges of authorship and control. Sol LeWitt turned the artwork into a set of instructions, where the hand of the maker could be anyone’s, and Harold Cohen’s AARON framed the computer as a collaborator rather than a mere tool. In this lineage, AI can be read as a new phase of an older inquiry: not a rupture, but an intensification of the questions about who decides, who benefits, and whose labour is assumed.

This is where the Forge Organizing article “Power, Not Panic: Why Organizers Must Engage with AI to Build the Future We Deserve” feels like a crucial anchor. It argues that “disengagement does not protect us. It just reinforces the systems we are trying to dismantle,” and insists that AI is neither a saviour nor a destroyer, but a terrain of power that can be studied, contested, and reshaped. The piece calls for organisers to treat AI like any other political terrain (policy, narrative, strategy), and invites us to look towards frameworks like Afrofuturism and cyborg feminism that ask: Who designs this? Whose futures are foreclosed, and whose are expanded?

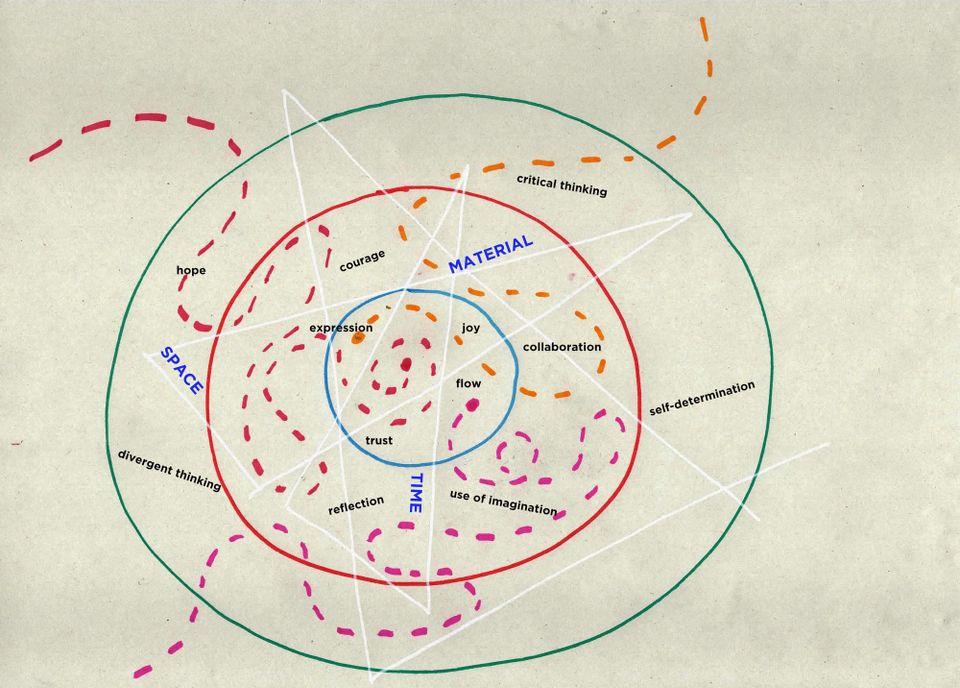

For me, that lands in a place of deep uncertainty and curiosity, and also of a very personal contradiction. I use AI, and I’m not entirely comfortable with it, but I’m not prepared to let go of it either. In my own life, AI has become a quiet accomplice in navigating my neurodivergence and the kind of administrative labour I’d otherwise struggle with. It helps me structure proposals, summarise long texts, and tidy up grant writing, so that tasks that used to take me hours now take far less time. That doesn’t magically erase the labour, but it does reduce the stress and the cognitive load. In an odd way, it frees up space and time for the things I care about most: being present with my family, making music, being in community, showing up in relationships, and doing the quieter, relational work that underpins so much of my practice and activism.

At the same time, I’m always aware of two simultaneous truths: on the one hand, AI is a tool that can be used sparingly and with care; on the other, it is embedded in systems that are deeply extractive and inequitable. I don’t want to pretend that my individual use of AI is neutral or innocent, but I’m also unwilling to pretend that not using it at all would be either feasible or ethical, given that it already shapes the worlds I’m trying to move through. That tension is hard to reconcile, and I think it’s meant to be. It forces me to keep asking: What am I asking AI to do, and whose labour is hidden behind that request?

Two contemporary artists whose work feels close to this kind of critical, relational engagement with AI are Rashaad Newsome and Kiki Shervington‑White. Newsome’s work with Being, a social humanoid AI installation, imagines an AI that is not gendered, racialised, or fixed in a single identity, but instead designed to “teach” and “learn” alongside humans, inviting audiences to confront their own complicity in systems of oppression. His project is not about creating a flawless machine, but about using AI to stage a kind of anti‑bias mirror, asking who gets to shape the values that drive these systems. In a similar spirit, Shervington‑White’s AI‑driven film work exposes how AI can embed and reproduce inequalities, but does so through participatory processes that put marginalised voices at the centre of the design and critique. Both artists use AI not as an end in itself, but as a way to interrogate power, visibility, and relational responsibility.

A special mention, too, for artist Julie Freeman (based in my home town, Margate), who leads a research practice that asks: what does this technology do to our relationship with the living world, and when is it worth using at all? She emphasises responsibility over hype, describes her practice as translating complex natural processes and live data into artworks, and frames her work as questioning how technology shapes the way we translate and perceive nature.

For me, what holds these examples together is care: care for the people whose data and labour underpin AI, care for the communities that are most vulnerable to its harms, and care for the relationships that actually sustain social change. This is why I’m drawn to the Forge article’s insistence that AI is not something we can afford to “opt out of,” but something we must learn to organise around, like any other political terrain. The question is not whether AI should exist, but under what conditions it is governed, who is resourced to shape it, and how artists and organisers can use it to redistribute time, labour, and creative capacity rather than centralise them.

I’m also aware of the many artists and designers who say, “Don’t let AI write for you. Don’t let it make art for you.” I understand the impulse behind that: we don’t want AI to flatten our voice, homogenise our work, or erase the labour that makes art meaningful. I agree that AI shouldn’t just be “making” art for us without any critical engagement or responsibility. But I’m also uneasy with the way those outcries can sometimes feel like a blanket moral purity test, without fully reckoning with the ways that technology has always shaped how we make art, think through ideas, and organise our lives. Within the context of social justice organising, where resources are thin and time is short, AI can actually add capacity and support if it’s used carefully and critically (see the Forge article for examples). That’s why I think it’s vital that organisers learn how to use AI alongside their values, not in place of them: to extend human activity, not replace it, and to do so with the humility of knowing that every technical tool carries political weight.

What I’m left with is a desire to move together with clearer intentions. I’m not ready to declare AI an ally, but I am ready to say that disengagement is not a strategy. I want to learn alongside others (artists, organisers, technologists, care workers) about how we might shape AI practices that are grounded in social justice, equity, and collective consent. That means saying no to some tools, yes to others, and always returning to the question: Who is this for, and whose power does it reinforce?

Art has always been a site where authorship is negotiated, destabilised, and reimagined. If AI can be included in that lineage, then our task is not to abandon it, but to insist that it earns its place by serving the same commitments to justice, care, and collective imagination that have animated our work long before the models arrived.

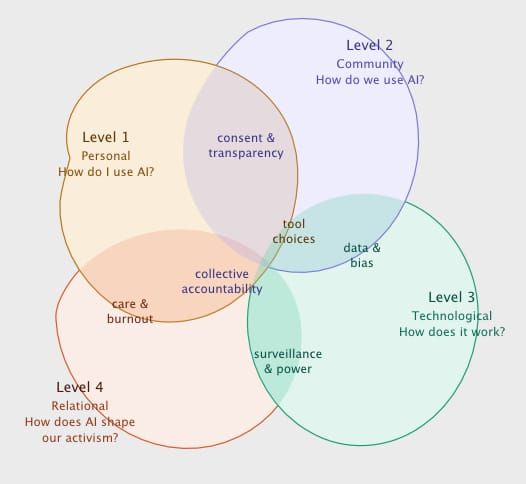

PS. I’m working on something to help me examine both my own and organisational use of AI, and how to engage with AI constructively, moving from the personal through to the relational and political. I’ll share it here soon.