Researching how we live with AI: a social justice led framework

This piece is a research‑led companion to the last one. In that article I sat with the tension between wanting to reject AI outright and realising that disengagement is not a strategy, especially when AI is already shaping the worlds of artists, organisers, and care workers. But I want to hold that alongside another claim, one I find equally important: that AI is not inevitable.

That phrase comes from Timnit Gebru, founder of the Distributed AI Research Institute (DAIR), who argues that the sense of AI's unstoppability is itself a political construction, manufactured and maintained, not a neutral description of technological progress. Her working position isn't pure refusal, but something more honest: work out where AI might be genuinely beneficial, raise the alarm where it is causing harm, and be willing to stop it where it's doing more harm than good. What I find useful about this is that it refuses both uncritical adoption and principled withdrawal. It stays inside the question.

"Disengagement is not a strategy" and "AI is not inevitable" are not opposites. They are two sides of the same critical engagement: stay present, but never let the question be settled for you by people whose interests are not yours.

What I'm offering here is a way of folding AI‑engagement into existing social justice practice: not a new framework on top of the pile, but a lens you can lay over the ones you're already using.

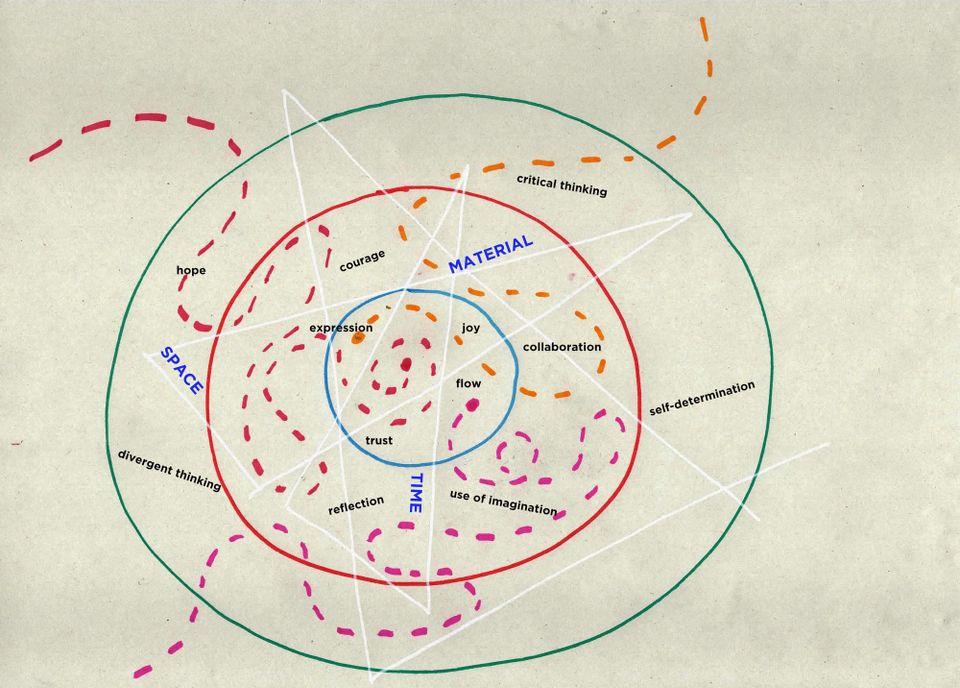

Frameworks we're already working with

Many organisers and movements already have strong tools for thinking about power, relationships, and strategy. Rather than reinventing those, I want to briefly name a few and flag the AI‑specific question each one opens up.

Bill Moyer's Movement Action Plan maps how movements move through stages from normal operation to new normal, and distinguishes four activist roles: rebel, change agent, citizen, reformer. AI question: at what stage of our movement are we using AI, and which roles are most affected?

The Momentum Cycle focuses on escalation, active popular support, and absorption into co‑optation. AI question: is AI amplifying escalation or deepening absorption and surveillance?

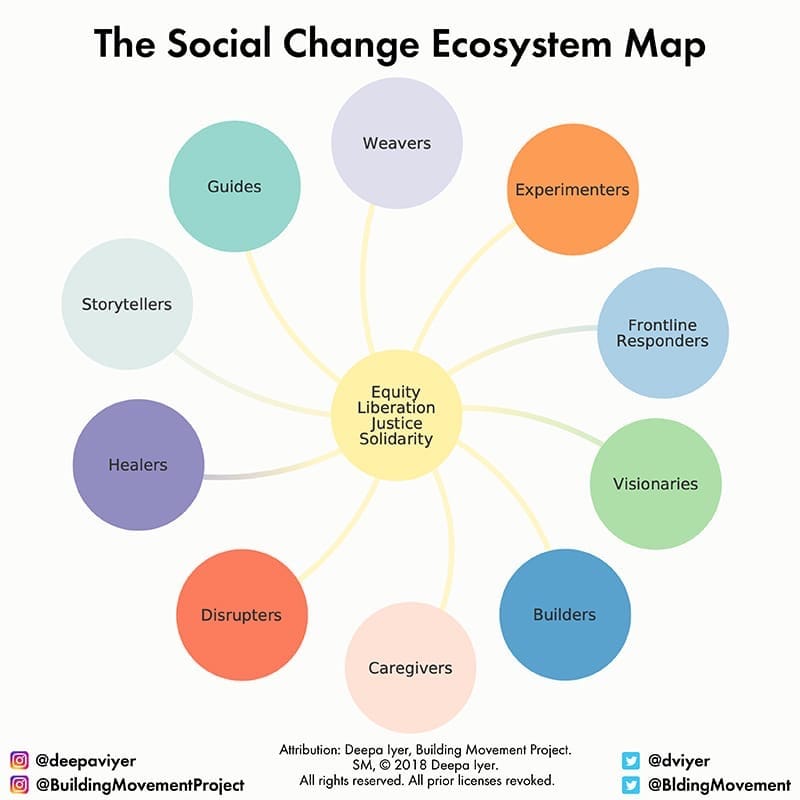

Deepa Iyer's Social Change Ecosystem Map identifies ten roles in social change - builder, storyteller, caregiver, disrupter, experimenter, visionary, and more. AI question: does AI help us play our roles more wisely and sustainably, or does it flatten difference and push everyone toward the same productivity logic?

Dean Spade's Mutual Aid distinguishes survival work that is participatory and solidarity-based from work that reproduces dependency, surveillance, or charity‑logic. AI question: is AI‑assisted care deepening grassroots mutual aid, or quietly stabilising the institutions Spade critiques while extracting our data and labour?

Sasha Costanza-Chock's Design Justice framework repositions designers not as decision-makers but as facilitators who contribute skills in service of communities rather than on behalf of them. It insists that the values, practices, and narratives embedded in any designed system are political choices, not neutral defaults, and that those choices almost always reflect the perspective of whoever was in the room when the thing was built. AI question: who decided how this tool was built, trained, and deployed, and whose community is being "served" without being asked?

Global Majority and Global South-led research, including Care International's work on AI and the Global South, The Engine Room's guidance for CSOs, and UNDP's community listening projects, foregrounds voices and risks that Northern-centred frameworks tend to miss. AI question: which communities are being erased or exploited?

My own framework sits within this field of practice. It's designed to be adapted, critiqued, or discarded according to your own priorities.

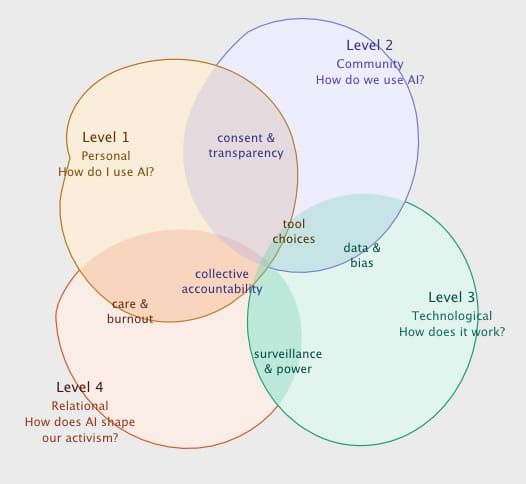

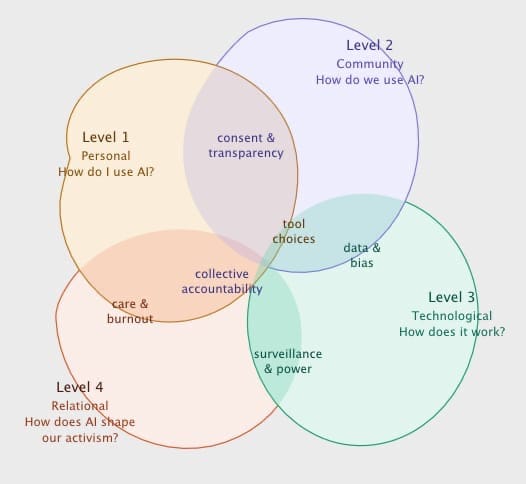

A five-level framework for social justice practitioners

These existing frameworks are already doing the heavy lifting. What I'm adding is a way of running AI‑engagement through them, a reflective tool with five levels, designed to help individuals and organisations assess how and why they currently use AI, notice what feels right and what feels uneasy, and monitor how that changes as tools evolve.

The five levels are personal, community, technological, relational/political, and ecological. Each is offered as a set of questions, usable in one‑on‑ones, team meetings, or as part of a research project.

Level 1: The personal - how do I use AI?

This is about your own relationship with AI: what you ask it to do, where you draw lines, and what consent you're giving or assuming.

- What do you use AI for - drafting, editing, summarising, translating, generating images?

- Where does it feel like support, and where does it feel like substitution or erasure?

- Are there decisions or creative acts you keep firmly in your own hands?

- How transparent are the tools you use about data, training sources, and ownership, and can you realistically refuse them?

This is where you develop an inner "AI ethics compass" based on your own experience and values. It's also where contradictions become visible: AI can reduce cognitive load and still feel ethically fraught. Both things can be true.

Level 2: The community - how do we use AI together?

The question shifts from "how do I use AI?" to "how do we use it, and with whose consent?"

Design Justice offers something most AI ethics guides miss here: the insistence, borrowed from disability justice, that there should be nothing about us without us. Most AI tools are built with a default user in mind who is not us: not neurodivergent, not Global Majority, not time-poor, not working in underfunded social movements. Even well-intentioned tools reproduce harm when they're built without the genuine participation of those they claim to serve.

- Has your group talked openly about AI use in communication, documentation, or creative work?

- Are there shared norms or an "AI use charter" for what's acceptable and what isn't?

- Does AI reduce overwork and burnout, or increase pressure to produce more, faster?

- When you experiment with AI together, do you pause to ask: who benefits, who is at risk, who is being made invisible?

- Are people able to shape or refuse these tools collectively, or is "choice" more theoretical than real?

Level 3: The technological - how do we understand AI?

The question here is less "can AI do this?" and more "how does it work, who is it working for, and does it need to exist at all?"

We are already inside AI-driven systems: recommendation engines, search autocomplete, route-planning, customer service chatbots. The question was never really whether we use AI; it's how conscious we are of where it's already shaping our habits, desires, and decisions. Part of this level is learning to notice that low-visibility AI before assessing the tools we are actively choosing.

And Gebru asks something I think is worth sitting with: why start with AI? When people talk about AI for social good, have they actually worked out whether AI is what the problem needs, or is it the available hammer? This isn't an argument against AI. It's an argument against its inevitability, and a prompt to ask: what would we do here if this tool didn't exist?

- How well do you and your colleagues understand how AI is trained, what data it uses, and how bias gets embedded?

- Are you experimenting in ways that let you observe its effects (what it distorts, misses, or amplifies) before rolling it out widely?

- Are you pushing for clearer rules around consent, ownership, and the right to refuse AI in your institution or network?

- Is AI actually the right tool here, or are you reaching for it by default?

Level 4: Relational / political - how does AI shape our relationships, power, and social justice work?

This is the core of a social justice-led lens, where anti‑racism, solidarity, and mutual aid intersect with power.

AI is not neutral. It tends to reproduce and intensify systemic racism because it is trained on historical data that already encodes discrimination in policing, housing, hiring, and healthcare. But it can also be used to surface disparities, support community-driven reporting, or enable accessible, language-appropriate tools for Global Majority-led organisations. The question isn't simply "is this AI racist?" It's "whose vision of the world is this AI optimising for, and do we want to reproduce that vision?"

Design Justice adds another layer here: the spiral of exclusion. Technology built by and for dominant groups doesn't just fail marginalised communities; it actively narrows what's imaginable for them. So the politics of AI aren't only in its outputs. They're in who was in the room at the design stage, whose data trained it, whose knowledge it treats as authoritative.

And running Spade's mutual aid lens through this level sharpens everything: is AI-assisted work collective, participatory, and care-centred, or is it top-down, surveillable, and extractive, quietly stabilising the very institutions we're trying to change?

- Does AI deepen surveillance over organisers, workers, or communities, or does it reduce the burden of over-documentation that accountability can carry?

- Are we deepening mutual trust and participatory decision-making, or reinforcing top-down, surveillable services?

- Who in our community or networks is most likely to be negatively impacted, through surveillance, misrepresentation, or data colonisation?

- Have we explicitly consulted Black-led and Global Majority-led partners about how they experience AI-related risks and opportunities?

- Is AI-use redistributive and reparative, or reproducing extractive and racist infrastructures?

Level 5: The ecological - how does AI affect the environment and our relationship to the earth?

AI is not immaterial. Training large models and running AI-heavy infrastructure consume vast amounts of electricity and water, draw on fossil-fuel-intensive grids, and place pressure on local ecosystems. The minerals that make it physical are extracted, unevenly and violently, from the Global South. There is genuine work on "AI for sustainability," but those narratives tend toward greenwash unless paired with honest accounting of AI's own footprint.

The "AI is not inevitable" argument matters here too. If AI is a choice, so is the ecological cost it carries. The decision to use a particular tool is also a decision about which extraction chains we are willing to draw on. That's not a reason to refuse AI entirely, but it is a reason to use it deliberately, sparingly, and with honest reckoning.

- What do we know about the energy, water, and hardware footprint of the tools we use?

- Are we choosing smaller, more efficient models where possible, and questioning whether every task needs generative AI?

- Who bears the ecological cost, and is it the same communities bearing other costs in our networks?

- Do we have a way of scaling back if it becomes clear our AI use is contributing to environmental harm?

- Is AI helping us care for the earth, or reinforcing the faster-bigger-more logics of white supremacy culture that deepen ecological breakdown?

An unfinished framework for an unfinished question

I want to be clear about what this framework is and isn't. It doesn't resolve the contradiction I named at the start: I still find AI ethically fraught even when I find it useful. What it offers is a structure for staying honest about that: a way of making the question visible, returning to it regularly, and refusing to let it be answered by default.

The contradiction doesn't go away. The tools we use are owned by people whose interests are not ours, trained on data taken without meaningful consent, and powered by infrastructure that exacts a cost borne primarily by communities with the least say in any of this. At the same time, using them reduces my cognitive load and frees up space and capacity to be more present with other humans. That's the tension. I don't think frameworks resolve it. I think they just make it harder to look away.

This is a work in progress. I'm offering it to be tested, adapted, and argued with, especially by people whose experience of AI's risks is more direct and less theoretical than my own.

Next: I'll be sharing a suggested facilitator's guide to using the framework in your organisation.